Introduction

In discussions about the future of modern computing, three terms frequently appear side by side yet are often confused with one another: Cloud Native, AI-Native Engineering, and AI-Native Architecture. They are sometimes used interchangeably as if they referred to the same thing, when in fact they operate at different layers and answer different questions.

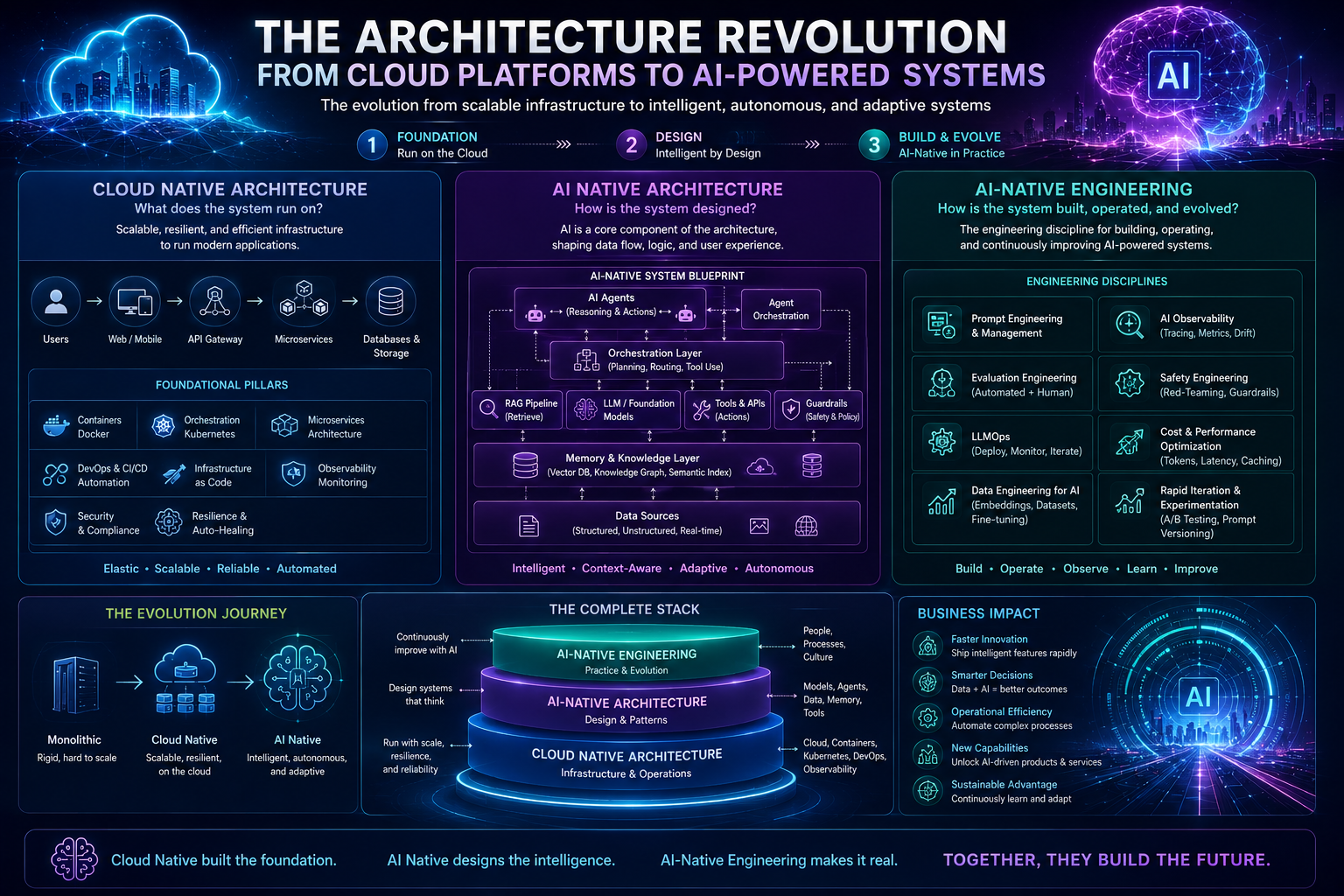

Cloud Native answers the question "what does this system run on?". AI-Native Architecture answers "how is this system designed?". AI-Native Engineering answers "how is this system built, operated, and evolved?".

These three are not competing concepts but complementary layers in a coherent stack. Understanding the relationship between them has become crucial for anyone designing modern systems in the era of large generative models. This article examines each concept individually, explores how they relate, and shows how they converge to form a new paradigm of software development.

Three Layers, Three Different Questions

To understand how the three relate, we need to see each as an answer to a different question in the system lifecycle.

Cloud Native: The Foundation Layer

Cloud Native is an infrastructure and operations paradigm built to take advantage of the elasticity, reliability, and scalability of cloud computing. The Cloud Native Computing Foundation (CNCF) definition names containers, service meshes, microservices, immutable infrastructure, and declarative APIs as exemplars of the approach; infrastructure as code is a closely associated practice.

Its key characteristics include:

Containerization as a portable unit of deployment

Orchestration through Kubernetes or similar platforms

Microservices that are loosely coupled

DevOps and CI/CD for rapid release cycles

Observability for distributed monitoring

Resilience through redundancy and self-healing

Cloud Native is the answer to the challenges of scale and release velocity in modern software. It provides a foundation that is elastic, repeatable, and declaratively managed.
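To illustrate what "declaratively managed" means in practice, here is a minimal sketch of a reconciliation step: the operator states the desired replica counts, and a loop computes the actions needed to get there. The `Action` type, the `reconcile` function, and the service names are hypothetical and chosen for illustration; real orchestrators such as Kubernetes implement this idea with far more machinery.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str      # "start" or "stop"
    service: str
    count: int


def reconcile(desired: dict[str, int], actual: dict[str, int]) -> list[Action]:
    """Return the actions that move `actual` replica counts toward `desired`."""
    actions = []
    for service in sorted(set(desired) | set(actual)):
        want = desired.get(service, 0)   # services absent from `desired` should not run
        have = actual.get(service, 0)
        if want > have:
            actions.append(Action("start", service, want - have))
        elif want < have:
            actions.append(Action("stop", service, have - want))
    return actions


# Hypothetical cluster state, for illustration only.
desired = {"api": 3, "retriever": 2}
actual = {"api": 1, "retriever": 2, "legacy-batch": 1}
plan = reconcile(desired, actual)
for action in plan:
    print(action)
```

Running the same function again once the actions have been applied yields an empty plan, which is the property that makes declarative systems repeatable and self-healing.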

AI-Native Architecture: The Design Layer

AI-Native Architecture is a system design pattern in which AI models are not bolted-on features but central components that shape the entire data flow, business logic, and user experience. It answers questions about the shape of the system.

An AI-native architecture typically includes:

Model-as-a-component: AI models as first-class components rather than external black boxes

Retrieval-Augmented Generation (RAG): separation between static knowledge (the model) and dynamic knowledge (vector databases, knowledge graphs)

Agentic patterns: agents that can choose tools, take actions, and plan multi-step tasks

Semantic data layer: data representations that models can understand (embeddings, ontologies)

Probabilistic flow control: business logic that accepts uncertainty as a natural input

Memory systems: short-term and long-term memory layers to maintain user context

Guardrails and validation: safety and quality layers that filter inputs and outputs

AI-native architecture is not simply "adding the ChatGPT API to an existing app." It is a new way of thinking about how data, models, and users interact.
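As a rough illustration of the "probabilistic flow control" item above, the sketch below routes a model prediction to one of three paths depending on its confidence. The `Prediction` type, the `route` function, and both thresholds are assumptions made for this example; real thresholds would be tuned against evaluation data, and the scores are assumed to be reasonably calibrated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float  # model-reported score in [0, 1]; assumed roughly calibrated


# Hypothetical thresholds, for illustration only.
AUTO_THRESHOLD = 0.90
CLARIFY_THRESHOLD = 0.60


def route(prediction: Prediction) -> str:
    """Choose a downstream path based on how certain the model is."""
    if not 0.0 <= prediction.confidence <= 1.0:
        raise ValueError("confidence must be within [0, 1]")
    if prediction.confidence >= AUTO_THRESHOLD:
        return f"auto:{prediction.label}"   # act without human involvement
    if prediction.confidence >= CLARIFY_THRESHOLD:
        return "ask_user_to_confirm"        # uncertain: confirm before acting
    return "escalate_to_human"              # too uncertain to act on


for score in (0.95, 0.70, 0.20):
    print(score, route(Prediction("refund_request", score)))
```

The point of the design is that uncertainty is an explicit branch condition in the business logic rather than an error case.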

AI-Native Engineering: The Practice Layer

If architecture is the blueprint, engineering is the practice of building, operating, and evolving that blueprint into a real system. AI-Native Engineering is the discipline of practices and engineering culture for AI-centric systems.

Its components include:

Prompt engineering and management: prompts as versioned artifacts that must be tested and deployed

Evaluation engineering: automated and human-in-the-loop evaluation methods for probabilistic outputs

LLMOps: pipelines for deploying, monitoring, and iterating on language models

Data engineering for AI: pipelines for embeddings, evaluation datasets, and fine-tuning data

Cost and performance engineering: optimizing cost per token, latency measured as time to first token (TTFT) and time per output token (TPOT), and caching strategy

Safety engineering: red-teaming, bias evaluation, hallucination audits

AI observability: prompt tracing, quality metrics, drift detection

Rapid iteration: A/B testing for model behavior, experiments with prompt changes

AI-Native Engineering is an evolution of DevOps and MLOps, but with its own challenges: outputs are non-deterministic, behavior can change when a model is updated even if the code is not, and quality is hard to capture in traditional unit tests.
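One way to make "prompts as versioned artifacts" tangible, and to notice when behavior may change without a code change, is to fingerprint everything that determines model behavior. The sketch below is an assumption-laden illustration: `release_fingerprint`, the model identifiers, and the parameter names are hypothetical and do not refer to any specific vendor API.

```python
import hashlib
import json


def release_fingerprint(prompt_template: str, model_id: str, params: dict) -> str:
    """Hash the prompt, model identifier, and sampling parameters together."""
    payload = json.dumps(
        {"prompt": prompt_template, "model": model_id, "params": params},
        sort_keys=True,        # key order must not affect the fingerprint
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Hypothetical prompt and model identifiers, for illustration only.
template = "Summarize the following ticket in two sentences:\n{ticket}"
before = release_fingerprint(template, "example-model-v1", {"temperature": 0.2, "max_tokens": 200})
after = release_fingerprint(template, "example-model-v2", {"temperature": 0.2, "max_tokens": 200})

print(before, after)
print("re-run evaluations:", before != after)
```

A changed fingerprint is a natural trigger for re-running the evaluation suite, even when the application code is untouched.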

Hierarchical Relationship: Foundation, Blueprint, and Construction

The most intuitive way to understand the relationship is through the analogy of building construction.

┌─────────────────────────────────────────────────────┐
│                AI-Native Engineering                │
│     (Practice of building, operating, evolving)     │
├─────────────────────────────────────────────────────┤
│               AI-Native Architecture                │
│     (Design and patterns of AI-centric systems)     │
├─────────────────────────────────────────────────────┤
│                    Cloud Native                     │
│     (Infrastructure, runtime, operations base)      │
└─────────────────────────────────────────────────────┘

Cloud Native is the ground and foundation: it provides the bedrock on which everything else stands. Without a solid foundation, AI systems cannot be reliably scaled, monitored, or deployed.

AI-Native Architecture is the building blueprint: it determines shape, space, and flow. It decides where the model lives, how data flows, how users interact, and how components connect to one another.

AI-Native Engineering is the process of construction and maintenance: the discipline that turns blueprints into real structures and then maintains, repairs, and extends them over time.

The three are inseparable. A brilliant AI-native blueprint will fail if it sits on a weak cloud-native foundation or is built with poor engineering practices. Conversely, the best engineering practices cannot rescue a poorly designed architecture.

How Cloud Native Enables AI-Native

Cloud Native provides capabilities that have become prerequisites for modern AI systems:

Elasticity for heterogeneous hardware. Kubernetes has evolved from an orchestrator of mostly CPU-bound containers into a platform that also schedules GPUs, TPUs, and other specialized accelerators. Projects such as the NVIDIA GPU Operator, Kueue, and Volcano extend Kubernetes for AI workloads.

Declarative and reproducible. Infrastructure as Code lets AI teams define training and inference environments that can be reproduced exactly, a critical requirement when model results depend on highly detailed configurations.

Distributed observability. Modern AI systems are networks of components: vector stores, retrieval services, model serving, guardrails, and caching layers. Cloud-native tooling like OpenTelemetry, Prometheus, and Jaeger can be extended to trace these flows.

CI/CD for models and prompts. Cloud-native pipelines become the backbone for gradual deployment of models and prompts, complete with canary releases and automated rollbacks.

Without cloud-native, AI-native devolves into a collection of artisanal scripts that cannot be scaled to production.
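The canary releases and automated rollbacks mentioned above can be sketched as a simple gate that compares a canary variant (a new prompt or model) against the current baseline. Everything here is an assumption for illustration: the `VariantStats` type, the `canary_decision` function, the minimum sample size, and the tolerated regression are hypothetical and would be set per system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantStats:
    requests: int
    failures: int  # requests that failed an automated quality or error check

    @property
    def failure_rate(self) -> float:
        return self.failures / self.requests if self.requests else 0.0


def canary_decision(
    baseline: VariantStats,
    canary: VariantStats,
    min_requests: int = 200,       # hypothetical minimum sample before deciding
    max_regression: float = 0.02,  # hypothetical tolerated increase in failure rate
) -> str:
    """Return "wait", "rollback", or "promote" for the canary variant."""
    if canary.requests < min_requests:
        return "wait"
    if canary.failure_rate - baseline.failure_rate > max_regression:
        return "rollback"
    return "promote"


baseline = VariantStats(requests=10_000, failures=300)
print(canary_decision(baseline, VariantStats(requests=50, failures=5)))
print(canary_decision(baseline, VariantStats(requests=500, failures=40)))
print(canary_decision(baseline, VariantStats(requests=500, failures=16)))
```

In a real pipeline the failure counts would come from the observability stack, and the decision would drive the deployment tool rather than a print statement.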

How AI-Native Architecture Depends on Engineering

AI-native architecture introduces new patterns that require corresponding engineering practices. A few concrete examples:

RAG demands serious data engineering. Retrieval quality determines generation quality. This means engineering teams must manage embedding pipelines, recall and precision evaluation, chunking strategies, and periodic data refresh.

AI agents demand evaluation engineering. An agent that can call tools and take multi-step actions has a vast state space. Engineering must define success metrics, build benchmark datasets, and run automated evaluations on every change.

Probabilistic flow control demands new observability. When a single step in a workflow can produce different outputs for the same input, traditional debugging is not enough. Engineering teams must implement semantic tracing that captures inputs, outputs, and model reasoning.

Guardrails demand continuous safety engineering. Content filters, prompt injection detection, and output validation are not components installed once. They are living systems that require regular red-teaming and updates against new threats.

In short, AI-native architecture surfaces a class of engineering problems that simply did not exist in the pure cloud-native world.
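As a concrete instance of the recall and precision evaluation mentioned for RAG, the following sketch scores one retrieval result against a hand-labeled set of relevant documents. The function name and document identifiers are hypothetical, and the sketch assumes the retrieved list contains no duplicates.

```python
def precision_recall_at_k(
    retrieved: list[str], relevant: set[str], k: int
) -> tuple[float, float]:
    """Compute precision@k and recall@k for a single query."""
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    # If fewer than k documents were retrieved, divide by the number returned.
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical labeled example: ranked retriever output and ground-truth relevant IDs.
retrieved = ["d3", "d7", "d1", "d9", "d4"]
relevant = {"d1", "d3", "d5"}

for k in (1, 3, 5):
    precision, recall = precision_recall_at_k(retrieved, relevant, k)
    print(f"k={k} precision={precision:.2f} recall={recall:.2f}")
```

Averaging these numbers over a benchmark set of queries gives a metric that can be tracked whenever the chunking strategy or embedding model changes.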

The Collaborative Cycle Among the Three

In real-world practice, these three layers are not static; they form continuous feedback loops.

From Engineering to Architecture. Insights from production often force architectural changes. For example, an engineering team might discover that inference costs are too high because prompts are too long. The fix is not low-level optimization but an architectural decision, such as introducing semantic caching or separating the prefill and decode paths.

From Architecture to Cloud Native. New architectural patterns drive the evolution of the cloud-native platform. When RAG becomes standard, vector databases become a first-class need. When agents become common, runtimes for safe tool execution become critical infrastructure. Cloud-native absorbs these patterns and elevates them into platform services.

From Cloud Native to Engineering. New capabilities at the platform layer enable new engineering practices. For example, Kubernetes support for partitioned GPUs, such as NVIDIA Multi-Instance GPU (MIG) exposed through the NVIDIA device plugin, allows teams to run model A/B tests at lower cost, which in turn changes how teams evaluate changes.

In other words, the three layers continuously shape one another in an ongoing evolutionary cycle.
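The semantic caching mentioned in the engineering-to-architecture example can be sketched as a lookup keyed on meaning rather than exact text. This is a toy illustration under strong assumptions: `embed` is a bag-of-words stand-in for a real embedding model, and `SemanticCache`, its threshold, and the sample prompts are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # hypothetical similarity cutoff
        self._entries: list[tuple[Counter, str]] = []

    def put(self, prompt: str, answer: str) -> None:
        self._entries.append((embed(prompt), answer))

    def get(self, prompt: str):
        """Return the cached answer of the most similar prompt, or None on a miss."""
        query = embed(prompt)
        best_score, best_answer = 0.0, None
        for vector, answer in self._entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None


cache = SemanticCache()
cache.put("What is the refund policy?", "Refunds are accepted within 30 days.")
print(cache.get("what is the refund policy"))    # same words, different casing
print(cache.get("How do I reset my password?"))  # unrelated prompt
```

A production version would use a real embedding model and a vector index instead of a linear scan, and the threshold becomes a quality-versus-cost knob that needs its own evaluation.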

Integration Challenges

Integrating the three layers smoothly is far from trivial. Several major challenges stand out:

Boundary blur. The line between "infrastructure" and "application" becomes fuzzy. Is the vector database a platform component (cloud-native) or an application architecture component (AI-native)? The answer depends on organizational context, and that decision impacts who owns and operates the component.

Skill gap. Cloud-native teams expert in Kubernetes do not necessarily understand the nuances of LLM serving. ML teams expert in model training do not necessarily understand best practices for distributed deployment. AI-native engineering demands a rare blend of skills.

Tooling fragmentation. The AI-native ecosystem moves at extreme speed with many overlapping tools. Choosing the right stack and maintaining consistency across teams is a continuous challenge.

Cost visibility. GPU costs are far higher than CPU costs, and cost per token can vary dramatically by usage pattern. Teams need more granular cost visibility than what cloud-native platforms typically provide.

Probabilistic quality. There is no equivalent of "100% test coverage" for AI systems. Teams must accept that quality is a distribution, not a binary, and build processes to manage that distribution.
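To make "quality is a distribution" operational, one option is to gate releases on a conservative estimate of the pass rate rather than on the raw percentage. The sketch below uses the Wilson score interval for a binomial proportion; the function name, the 0.85 release bar, and the sample counts are assumptions for illustration.

```python
import math


def wilson_lower_bound(passes: int, trials: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a pass rate (z=1.96 is about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= passes <= trials:
        raise ValueError("passes must be between 0 and trials")
    p = passes / trials
    denominator = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denominator


RELEASE_BAR = 0.85  # hypothetical minimum acceptable pass rate

# Both runs observe a 90% pass rate, but with different amounts of evidence.
for passes, trials in ((90, 100), (900, 1000)):
    lower = wilson_lower_bound(passes, trials)
    print(f"{passes}/{trials}: lower bound {lower:.3f}, release ok: {lower >= RELEASE_BAR}")
```

With the same observed 90% pass rate, the smaller evaluation set does not clear the bar while the larger one does, which shows why evaluation set size is part of the quality definition.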

A Convergence Pattern: Toward the AI-Native Platform

The emerging trend today is the convergence of all three layers into what is often called an AI-Native Platform or AI Engineering Platform. Its characteristics include:

AI-aware Kubernetes: an orchestrator that natively understands GPUs, models, and inference workloads

Model serving as a platform service: not a task for application teams, but provided by the platform

Vector stores and retrieval as managed services: at parity with traditional databases

Integrated evaluation frameworks: built into the CI/CD pipeline

LLM observability: prompt tracing, quality metrics, and drift detection as standard

Cost governance: visibility into cost per request, per user, per feature

Such a platform allows application teams to focus on business logic and user experience, while low-level complexity around model serving, GPU management, and distributed observability is abstracted by the platform.
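The cost governance item above can be illustrated with a small aggregation over per-request usage records. The prices, the `Usage` record, and the feature and user names are all hypothetical; actual pricing and metering fields depend on the model provider and platform.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens, for illustration only.
PRICE_PER_1K_INPUT = 0.001
PRICE_PER_1K_OUTPUT = 0.002


@dataclass(frozen=True)
class Usage:
    feature: str
    user: str
    input_tokens: int
    output_tokens: int


def request_cost(usage: Usage) -> float:
    """Cost of a single request under the assumed per-token prices."""
    return (
        usage.input_tokens / 1000 * PRICE_PER_1K_INPUT
        + usage.output_tokens / 1000 * PRICE_PER_1K_OUTPUT
    )


def cost_by(records: list[Usage], key: str) -> dict[str, float]:
    """Sum request costs grouped by a Usage attribute such as "feature" or "user"."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += request_cost(record)
    return dict(totals)


records = [
    Usage("search", "alice", input_tokens=2000, output_tokens=500),
    Usage("search", "bob", input_tokens=1000, output_tokens=1000),
    Usage("summarize", "alice", input_tokens=8000, output_tokens=1000),
]
print(cost_by(records, "feature"))
print(cost_by(records, "user"))
```

The same records answer all three questions named above (per request, per user, per feature), provided each request is tagged with that metadata when it is logged.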

This is the same natural evolution that cloud-native went through over the past two decades: from manual ops, to virtualization, to containerization, to full orchestration platforms. AI-native is likely to follow the same trajectory, only at a much higher velocity.

Practical Implications for Organizations

For organizations seeking to adopt this paradigm, several practical recommendations follow:

Don't skip cloud-native. Without a mature cloud-native foundation, AI-native efforts will be hobbled by basic operational issues. Investment in Kubernetes, observability, and CI/CD is a prerequisite.

Separate architecture decisions from tooling decisions. Choose architectural patterns (RAG, agentic, hybrid) based on domain needs, not based on what tools are trendy. Tools will change; architectural patterns are more durable.

Build evaluation practices from day one. AI-native engineering without systematic evaluation is like flying without instruments. Investment in evaluation datasets and evaluation automation is as important as investment in the model itself.

Define platform ownership. Identify the team responsible for the AI-native platform layer and distinguish it from application teams. Without this separation, every team will rebuild the same capabilities in isolation.

Invest in cross-disciplinary skills. The AI-native engineers of the future will need to understand distributed systems, machine learning, and the business domain simultaneously. Training and team rotation become essential.

Conclusion

Cloud Native, AI-Native Architecture, and AI-Native Engineering are not competing concepts but three layers of a larger paradigm for building modern intelligent systems.

Cloud Native is the foundation: the elastic, managed infrastructure that makes everything above possible. AI-Native Architecture is the blueprint: the design pattern that places AI models at the core of the system rather than as an accessory. AI-Native Engineering is the practice discipline: the way to build, operate, and evolve that system in the real world while accepting its probabilistic nature.

Organizations that succeed in this era are not those that master a single layer, but those that understand how all three shape and reinforce one another. They treat cloud-native as a mature prerequisite, AI-native architecture as a careful strategic decision, and AI-native engineering as a continuously evolving culture.

The era of large generative models does not replace cloud-native; it builds on top of it. But to truly leverage these new capabilities, we need new ways of thinking about architecture and new practices for engineering. Together, the three form the basis for the next wave of intelligent applications, applications that will define the coming decade in computing.