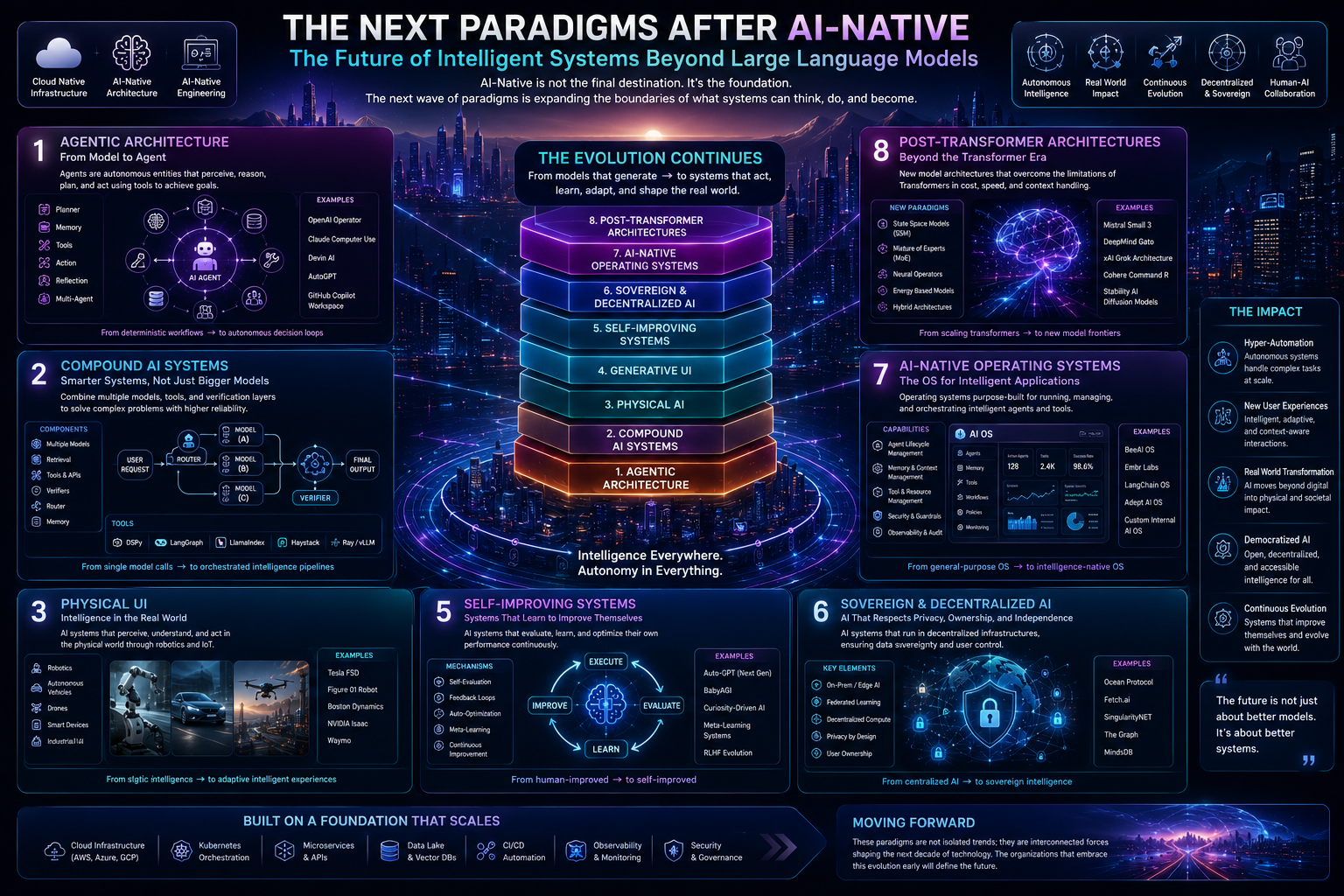

The technology industry has spent the last few years focused on the transition from Cloud-Native to AI-Native systems. Yet while many organizations are only beginning their AI-Native journey, the broader landscape is already moving beyond it. New paradigms are emerging that push intelligent systems far beyond simply placing AI models at the center of applications.

These paradigms do not replace AI-Native Architecture. Instead, they extend it — adding new layers, expanding into new domains, and redefining the fundamental unit of computation itself. Understanding these shifts is becoming increasingly important for organizations that want to remain competitive in the next wave of technological transformation.

This emerging era is being shaped by several interconnected paradigms:

Agentic Architecture

Compound AI Systems

Physical AI

Generative UI

Self-Improving Systems

Sovereign & Decentralized AI

AI-Native Operating Systems

Post-Transformer Architectures

Together, these trends represent the evolution of computing beyond traditional software and even beyond today’s Large Language Models.

Agentic Architecture: From Models to Autonomous Agents

One of the most significant shifts is the transition from AI models toward autonomous agents.

Traditional AI systems behave like functions:

input a prompt, receive an output.

Agentic systems work differently. Agents possess:

goals,

memory,

planning capabilities,

tool access,

and the ability to take actions autonomously.

Instead of merely responding, agents can:

decide what needs to be done,

execute multi-step workflows,

observe outcomes,

and adapt their strategy dynamically.

This changes software architecture fundamentally. Applications evolve from deterministic workflows with occasional AI calls into autonomous decision loops driven by intelligent agents.

Modern agentic systems often include:

planners,

memory systems,

execution runtimes,

reflection mechanisms,

and multi-agent collaboration layers.

Emerging standards and frameworks such as:

Anthropic’s Model Context Protocol (MCP),

Google’s Agent2Agent (A2A),

and OpenAI’s Agents SDK

are beginning to define interoperability between agents and tools.
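MCP messages are JSON-RPC 2.0. The toy dispatcher below is a rough sketch of what tool interoperability looks like on the wire. It answers only the `tools/list` and `tools/call` methods and deliberately skips the initialization handshake, capability negotiation, and transport that a real MCP server (or an official SDK) would handle. The `get_weather` tool is hypothetical.

```python
import json

# Hypothetical tool exposed by this toy server.
def get_weather(location: str) -> str:
    return f"Sunny in {location}"

TOOLS = {
    "get_weather": {
        "description": "Get current weather for a location",
        "inputSchema": {  # JSON Schema describing the tool's arguments
            "type": "object",
            "properties": {"location": {"type": "string"}},
            "required": ["location"],
        },
        "fn": get_weather,
    }
}

def error(rid, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": rid,
                       "error": {"code": code, "message": message}})

def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request for tools/list or tools/call."""
    req = json.loads(raw)
    method, rid = req.get("method"), req.get("id")
    if method == "tools/list":
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(rid, -32602, "Unknown tool")     # JSON-RPC "Invalid params"
        text = tool["fn"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(rid, -32601, "Method not found")     # JSON-RPC "Method not found"
    return json.dumps({"jsonrpc": "2.0", "id": rid, "result": result})
```

Because the client discovers tools through `tools/list` (names plus a JSON Schema for arguments), any agent that speaks the protocol can call any compliant tool without custom glue code.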

Compound AI Systems: Smarter Systems, Not Just Bigger Models

Another major trend is the rise of Compound AI Systems.

Rather than relying on a single massive model, these systems combine multiple components:

specialized models,

retrieval systems,

reasoning loops,

external tools,

validators,

and routing mechanisms.

The core idea is simple:

future AI progress may come less from larger models and more from intelligent orchestration.

In practice, compound systems can:

route simple tasks to lightweight models,

reserve expensive reasoning models for complex problems,

validate outputs automatically,

and improve reliability through redundancy.

This approach can substantially lower the cost of AI systems, because most requests never reach the most expensive model, while also improving quality and resilience.
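A minimal routing sketch shows how these pieces fit together. The two model functions are hypothetical stubs, and the keyword heuristic and empty-output validator are deliberately naive placeholders for the learned routers and schema or test-based validators a production system would use.

```python
# Hypothetical model stubs standing in for a cheap model and an expensive one.
def small_model(prompt: str) -> str:
    return "4" if prompt == "2+2?" else ""   # fast, but often has no answer

def large_model(prompt: str) -> str:
    return f"detailed answer to: {prompt}"

def looks_complex(prompt: str) -> bool:
    """Toy routing heuristic: long prompts or reasoning keywords need the big model."""
    keywords = ("prove", "plan", "why")
    return len(prompt.split()) > 20 or any(k in prompt.lower() for k in keywords)

def is_valid(answer: str) -> bool:
    """Toy validator: reject empty output. Real systems might check a schema or run tests."""
    return bool(answer.strip())

def route(prompt: str) -> tuple[str, str]:
    """Try the cheap model on simple tasks; escalate if the task looks hard or validation fails."""
    if not looks_complex(prompt):
        out = small_model(prompt)
        if is_valid(out):
            return "small", out
    return "large", large_model(prompt)
```

Here `route("2+2?")` is answered by the small model, while a "why" question or a failed validation escalates to the large one. The fallback path is a simple form of the redundancy mentioned above: a cheap attempt that fails is caught rather than returned to the user.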

Frameworks and runtimes such as:

DSPy,

LangGraph,

LlamaIndex,

Haystack,

Ray,

and vLLM

are becoming key building blocks for these architectures.

Physical AI: Bringing Intelligence Into the Real World

For years, AI was primarily confined to screens, text, and images.

Physical AI — also called Embodied AI — expands intelligence into the physical world:

robotics,

autonomous vehicles,

drones,

IoT devices,

and cyber-physical systems.

Unlike purely digital settings, physical environments introduce:

real-time latency constraints,

unpredictable conditions,

sensor fusion challenges,

and physical safety risks.
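Sensor fusion is one of these challenges that has a compact classical answer. The sketch below uses inverse-variance weighting. It assumes independent, unbiased sensors with known noise variances, which real robots only approximate. The lidar and camera readings are hypothetical.

```python
def fuse(readings: list[tuple[float, float]]) -> tuple[float, float]:
    """Inverse-variance weighted fusion of independent, unbiased measurements.

    Each reading is (value, variance). Noisier sensors get less weight, and the
    fused variance is smaller than any single sensor's variance.
    """
    if not readings:
        raise ValueError("need at least one reading")
    if any(var <= 0 for _, var in readings):
        raise ValueError("variances must be positive")
    weights = [1.0 / var for _, var in readings]
    total = sum(weights)
    estimate = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    return estimate, 1.0 / total

# Hypothetical distance readings in metres: (value, variance).
lidar, camera = (10.0, 0.01), (10.4, 0.04)
distance, variance = fuse([lidar, camera])
```

The fused distance comes out at roughly 10.08 m with variance 0.008. The estimate sits closer to the more precise lidar, and its uncertainty is lower than either sensor's alone. Kalman filters generalize this same weighting to estimates that evolve over time.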

One of the most important developments in this area is the emergence of world models — AI systems capable of learning internal simulations of how the physical world behaves.
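Systems such as NVIDIA Cosmos or DeepMind Genie are large neural networks trained on video, but the core idea of a world model fits in a few lines. A world model learns a transition function from experience and then "imagines" the outcome of actions before taking them. The toy sketch below assumes a one-dimensional state `s`, a control input `u`, and linear dynamics `s_next = a*s + b*u`, all chosen purely for illustration.

```python
def fit_dynamics(transitions: list[tuple[float, float, float]]) -> tuple[float, float]:
    """Learn s_next = a*s + b*u from observed (s, u, s_next) transitions.

    Least squares via the 2x2 normal equations, solved with Cramer's rule.
    """
    s_s = sum(s * s for s, _, _ in transitions)
    u_u = sum(u * u for _, u, _ in transitions)
    s_u = sum(s * u for s, u, _ in transitions)
    s_y = sum(s * y for s, _, y in transitions)
    u_y = sum(u * y for _, u, y in transitions)
    det = s_s * u_u - s_u * s_u
    if det == 0:
        raise ValueError("transitions do not pin down both a and b")
    a = (s_y * u_u - s_u * u_y) / det
    b = (s_s * u_y - s_u * s_y) / det
    return a, b

def imagine(a: float, b: float, s0: float, actions: list[float]) -> list[float]:
    """Roll the learned model forward to predict states without acting in the real world."""
    states, s = [], s0
    for u in actions:
        s = a * s + b * u
        states.append(s)
    return states
```

A planner can call `imagine` on many candidate action sequences and execute only the most promising one. This is the basic reason world models matter for robotics: trial and error happens in simulation instead of on physical hardware.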

Projects such as:

NVIDIA Cosmos,

DeepMind Genie,

Meta V-JEPA,

NVIDIA GR00T,

Tesla Optimus,

and Google DeepMind’s RT-2

represent early steps toward general-purpose embodied intelligence.

This marks the beginning of AI moving beyond digital reasoning into real-world interaction.

Generative UI: Interfaces That Build Themselves

Traditional user interfaces are static.

Designers create screens, developers implement them, and users navigate fixed interaction patterns.

Generative UI changes this completely.

Instead of predefined layouts, AI dynamically generates interfaces based on:

user intent,

context,

goals,

and real-time data.

For example, instead of opening a travel app with hundreds of filters, a user could simply describe:

“Plan a family vacation to Bali in July.”

The system would then generate:

interactive maps,

personalized recommendation cards,

dynamic controls,

and contextual workflows tailored specifically for that task.
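One common way to build this kind of interface, sketched here under assumptions, has the model emit a declarative UI spec (data) rather than executable code. The client then renders the spec using components it already trusts. The component names are hypothetical, and `generate_ui` is a keyword-based stand-in for the model call.

```python
# Components the client already knows how to render (hypothetical names).
ALLOWED = {"screen", "map", "card_list", "date_range", "checklist"}

def generate_ui(intent: str) -> dict:
    """Stand-in for a model call that turns a user intent into a declarative UI spec."""
    text = intent.lower()
    children = [{"type": "map", "props": {"query": intent}}]
    if "family" in text:
        children.append({"type": "card_list",
                         "props": {"kind": "hotels", "filter": "family-friendly"}})
    if "july" in text:
        children.append({"type": "date_range", "props": {"month": 7}})
    return {"type": "screen", "children": children}

def validate(spec: dict) -> dict:
    """Reject specs that use components the client cannot render safely."""
    types = [spec["type"]] + [child["type"] for child in spec.get("children", [])]
    unknown = [t for t in types if t not in ALLOWED]
    if unknown:
        raise ValueError(f"unknown components: {unknown}")
    return spec

spec = validate(generate_ui("Plan a family vacation to Bali in July."))
```

For the Bali request, the resulting spec contains a map, family-friendly hotel cards, and a July date picker. Validating against a fixed component set is the design choice that keeps a generated interface predictable: the model decides *what* to show, and the client controls *how* it is rendered.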

Platforms such as:

Vercel v0,

Claude Artifacts,

OpenAI Canvas,

and Apple Intelligence

are early examples of this transition.

Generative UI represents a future where interfaces are no longer manually designed for average users, but dynamically generated for each individual interaction.

Self-Improving Systems: AI That Evolves Itself

One of the most ambitious directions in AI research is the development of systems capable of improving themselves autonomously.

These systems aim to:

write code,

run experiments,

evaluate outcomes,

optimize architectures,

and evolve continuously with minimal human intervention.

Projects such as:

AlphaEvolve,

Sakana AI’s AI Scientist,

AutoML systems,

and self-play reasoning models

demonstrate early forms of this capability.

Today, practical implementations mainly focus on:

automatic optimization,

prompt tuning,

and architecture exploration.

But long-term research is moving toward recursive self-improvement — systems that can fundamentally enhance their own capabilities.

This introduces enormous architectural and safety challenges, which call for safeguards such as:

version lineage tracking,

resource management,

kill switches,

and continuous evaluation frameworks.

Sovereign & Decentralized AI

As AI infrastructure becomes increasingly centralized around a small number of hyperscalers, concerns about:

sovereignty,

privacy,

data ownership,

and geopolitical dependency

are growing rapidly.

This has led to two parallel trends:

Sovereign AI

Countries and enterprises deploy their own foundation models within private infrastructure and jurisdictional boundaries.

Decentralized AI

Training and inference are distributed across peer-to-peer networks of independently operated compute nodes rather than centralized data centers.

Technologies enabling this shift include:

federated learning,

confidential computing,

open-weight models,

secure multi-party computation,

and edge AI accelerators.
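Federated learning is the easiest of these to illustrate. The sketch below shows only the server-side aggregation step in the style of federated averaging (FedAvg). Clients train locally and send back just their weights and example counts, so raw data never leaves the client. Model weights are simplified to flat lists of floats.

```python
def fed_avg(client_updates: list[tuple[list[float], int]]) -> list[float]:
    """Federated averaging aggregation step.

    Each client sends only (weights, num_examples); raw data stays on the client.
    The server averages the weights, weighted by each client's data size.
    """
    if not client_updates:
        raise ValueError("no client updates")
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("clients reported no training examples")
    dim = len(client_updates[0][0])
    global_weights = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("clients sent models of different shapes")
        for i, w in enumerate(weights):
            global_weights[i] += w * n / total
    return global_weights
```

For example, averaging a client with weights `[1.0, 2.0]` trained on 1 example and a client with `[3.0, 4.0]` trained on 3 examples yields `[2.5, 3.5]`. Techniques such as secure multi-party computation can additionally hide individual updates from the server during this step.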

Future AI systems may increasingly operate across:

hybrid cloud,

edge infrastructure,

sovereign regions,

and decentralized compute networks simultaneously.
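Operating across these environments turns inference placement into a policy decision as well as a performance one. The sketch below assumes a simple data-residency rule: data must stay in its own jurisdiction unless policy allows otherwise, and among compliant endpoints the fastest one wins. The endpoint names, jurisdictions, and latencies are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Endpoint:
    name: str
    kind: str           # e.g. "cloud", "edge", "sovereign", "decentralized"
    jurisdiction: str   # where data would be processed
    latency_ms: float

def place(endpoints: list[Endpoint], data_jurisdiction: str, may_leave: bool) -> Endpoint:
    """Return the fastest endpoint that satisfies the data-residency policy."""
    compliant = [e for e in endpoints
                 if may_leave or e.jurisdiction == data_jurisdiction]
    if not compliant:
        raise LookupError(f"no endpoint can process {data_jurisdiction} data")
    return min(compliant, key=lambda e: e.latency_ms)

# Hypothetical fleet spanning the four deployment targets.
FLEET = [
    Endpoint("hyperscaler-us", "cloud", "US", 20.0),
    Endpoint("national-cloud-eu", "sovereign", "EU", 35.0),
    Endpoint("factory-gateway", "edge", "EU", 5.0),
    Endpoint("p2p-pool", "decentralized", "GLOBAL", 80.0),
]
```

With this fleet, EU data that may not leave the EU goes to the edge gateway, and US-resident data goes to the US cloud. Real policies would also weigh cost, model availability, and hardware attestation, but the compliance filter always runs first.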

AI-Native Operating Systems

Another emerging idea is the concept of AI-Native Operating Systems.

Traditional operating systems were designed long before modern AI existed. AI-Native OS concepts propose a future where AI becomes a core primitive of the operating system itself rather than just another application.

This vision includes:

persistent contextual memory,

intent-driven interaction,

AI orchestration at the OS layer,

tool registries,

and agentic system shells.
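To give a feel for a tool registry with intent-driven dispatch, here is a toy sketch. Applications register capabilities with the system, and a user's request is routed to the best-matching capability instead of the user opening an app. The decorator, keywords, and example capabilities are hypothetical. Keyword overlap stands in for the model-based intent resolution an AI-Native OS would use.

```python
from typing import Callable

# OS-level registry: capability name -> (trigger keywords, handler).
REGISTRY: dict[str, tuple[set[str], Callable[[str], str]]] = {}

def tool(*keywords: str):
    """Decorator an app uses to register a capability with the system."""
    def wrap(fn: Callable[[str], str]) -> Callable[[str], str]:
        REGISTRY[fn.__name__] = (set(keywords), fn)
        return fn
    return wrap

@tool("email", "send", "message")
def send_email(text: str) -> str:
    return f"email drafted: {text}"

@tool("calendar", "meeting", "schedule")
def schedule_meeting(text: str) -> str:
    return f"meeting scheduled: {text}"

def handle_intent(text: str) -> str:
    """Route a natural-language request to the capability whose keywords best match."""
    words = set(text.lower().split())
    _, (keywords, fn) = max(REGISTRY.items(), key=lambda item: len(item[1][0] & words))
    if not keywords & words:
        return "no matching tool"
    return fn(text)
```

A request such as "schedule a meeting with Ana" reaches the calendar capability without the user choosing an application. An agentic shell would replace the keyword match with a model that can also chain several registered capabilities together.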

Early signs of this direction can already be seen in:

Apple Intelligence,

Microsoft Copilot+ PCs,

Android with Gemini Nano,

and various experimental AI-first devices.

In this future, users may no longer “open apps.”

Instead, they interact with intelligent systems capable of coordinating applications autonomously.

Post-Transformer Architectures

Transformer models have dominated AI since 2017, but they also face scaling limitations:

attention cost that grows quadratically with sequence length,

memory requirements (notably the key-value cache) that grow with context length,

and high inference costs.
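The quadratic term comes from standard scaled dot-product attention, where every token's query is compared with every token's key. With sequence length $n$ and key dimension $d_k$, the score matrix has $n \times n$ entries, so doubling the context quadruples the work:

```latex
\begin{aligned}
\mathrm{Attention}(Q, K, V) &= \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right) V,
  \qquad QK^{\top} \in \mathbb{R}^{n \times n} \\
\text{cost of } QK^{\top} &= O(n^{2} d_k) \text{ time}, \quad n^{2} \text{ scores per head per layer} \\
n = 4096 &\Rightarrow n^{2} = 16{,}777{,}216 \\
n = 8192 &\Rightarrow n^{2} = 67{,}108{,}864 = 4 \times 16{,}777{,}216
\end{aligned}
```

Memory-efficient kernels such as FlashAttention avoid storing the full $n \times n$ matrix, but they still perform quadratically many operations. Separately, the key-value cache kept during generation grows linearly with $n$.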

This is driving research into alternative architectures such as:

State Space Models (SSMs),

Mamba,

RWKV,

Hyena,

Liquid Neural Networks,

Diffusion Language Models,

and xLSTM.
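Several of these alternatives, SSMs in particular, replace all-pairs attention with a recurrence that carries a fixed-size state forward. The sketch below is the simplest possible linear state space model, with a scalar state and fixed parameters `a`, `b`, and `c` chosen for illustration. Mamba, for comparison, uses multi-dimensional states and input-dependent ("selective") parameters, so treat this only as the shape of the computation.

```python
def ssm_scan(xs: list[float], a: float, b: float, c: float) -> list[float]:
    """Minimal 1-D linear state space model:

        h_t = a * h_{t-1} + b * x_t,    y_t = c * h_t

    Each step does O(1) work on a fixed-size state, so a length-n sequence
    costs O(n) time, and the state carried between steps never grows.
    """
    h = 0.0
    ys = []
    for x in xs:
        h = a * h + b * x   # fold the new input into the running state
        ys.append(c * h)    # read out from the state
    return ys
```

Feeding a single impulse `[1.0, 0.0, 0.0]` with `a=0.5, b=1.0, c=2.0` produces `[2.0, 1.0, 0.5]`: the input's influence decays geometrically instead of being recomputed against every earlier token. This is why such models can promise longer contexts and cheaper inference, provided the state is expressive enough to remember what matters.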

If these architectures mature, they could enable:

far longer context windows,

dramatically lower inference costs,

smaller yet more capable models,

and efficient edge deployment.

This may fundamentally reshape AI infrastructure over the coming decade.

The Convergence of Paradigms

What makes this moment particularly important is that these paradigms are not isolated trends.

They are deeply interconnected:

Agentic systems depend on Compound AI orchestration,

Physical AI uses agentic planning,

Generative UI relies on intelligent agents,

Self-Improving Systems build on compound architectures,

Sovereign AI pushes inference toward the edge,

and Post-Transformer models may enable local AI-Native operating systems.

Together, they form a broader evolution of computing itself.

Conclusion

AI-Native Architecture is not the final destination.

It is only one stage in a much larger technological transformation.

The next era of computing is moving toward:

autonomous agents,

intelligent orchestration,

adaptive interfaces,

embodied intelligence,

decentralized infrastructure,

and self-evolving systems.

The future of computing will not be defined by a single model or platform.

It will be defined by intelligent ecosystems capable of:

reasoning,

learning,

collaborating,

adapting,

and continuously improving themselves.

The AI era is no longer just about models.

It is becoming an era of intelligent systems.